Discretionary Trees: Understanding Street-Level Bureaucracy via Machine Learning

Authors: Gaurab Pokharel¹, Sanmay Das¹, and Patrick J. Fowler²

Affiliations: ¹Virginia Tech, ²Washington University in St. Louis

Venue: Proceedings of the AAAI Conference on Artificial Intelligence (AAAI 2024)

Overview

Street-level bureaucrats (caseworkers, triage nurses, etc.) implement policy in real time while exercising judgment in individual cases. That discretion can be valuable, since it allows exceptions when context matters, but it can also introduce inequities if applied inconsistently.

This project uses machine learning to answer three practical questions:

- Simple rules: How much of real decision-making can be summarized as short, human-usable heuristics?

- Consistency: When decisions go beyond simple rules, are they still procedurally consistent?

- Discretion effects: When discretion happens, who receives it, and does it appear to improve outcomes?

Data and Setting

We use administrative data from the St. Louis Homeless Management Information System (HMIS), covering household characteristics and assigned interventions during 2007–2014, when assignment was not fully formulaic.

We focus primarily on the tradeoff between:

- Emergency Shelter (ES) (less intensive)

- Transitional Housing (TH) (more intensive)

Method

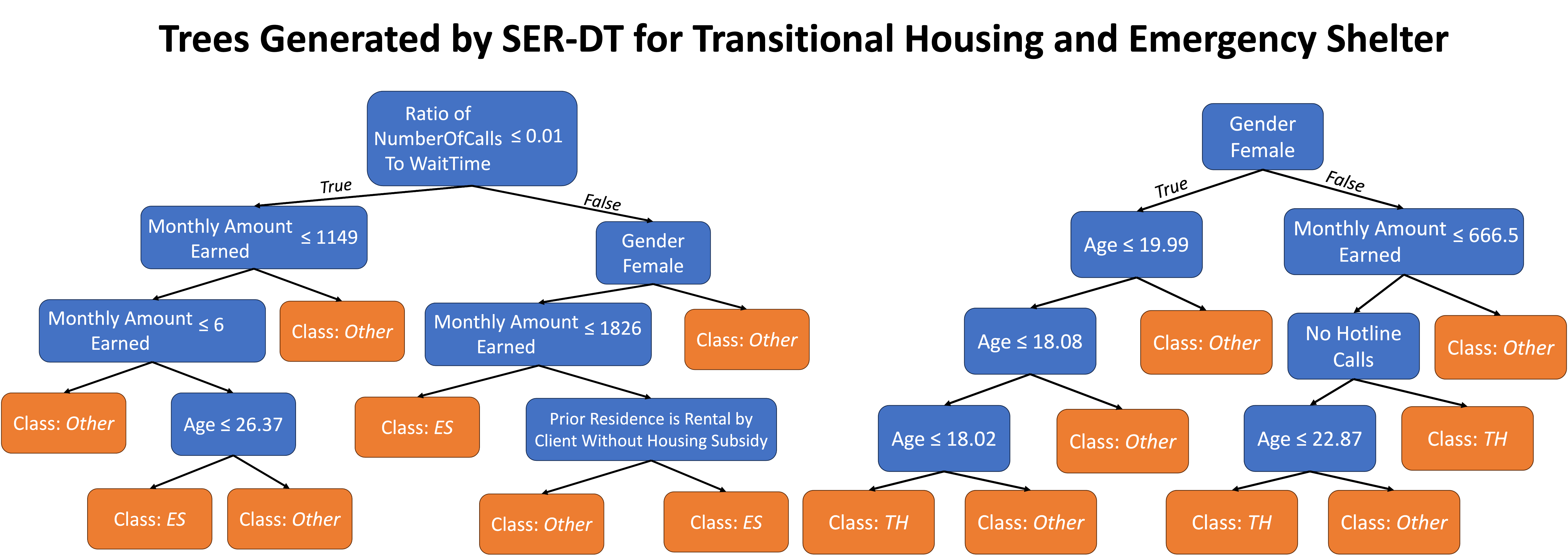

1) “Default heuristics” via short decision trees

We learn short, constrained decision trees that approximate the kind of quick heuristics a caseworker could plausibly apply in “easy” cases.
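As a rough illustration of what "short, constrained" means, the sketch below greedily fits a depth-limited tree in plain Python. The feature names (`has_children`, `monthly_income`), the six synthetic households, and the Gini split criterion are all assumptions made for the example. They are not the HMIS features or the tree-learning procedure used in the paper.

```python
from collections import Counter

def gini(labels):
    """Gini impurity of a list of class labels."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values()) if n else 0.0

def majority(labels):
    """Most common label in a non-empty list."""
    return Counter(labels).most_common(1)[0][0]

def fit_tree(rows, labels, max_depth):
    """Greedily fit a depth-limited tree; returns a leaf label or a nested dict."""
    if max_depth == 0 or len(set(labels)) == 1:
        return majority(labels)
    best = None
    for feature in rows[0]:
        for threshold in sorted({r[feature] for r in rows}):
            left = [i for i, r in enumerate(rows) if r[feature] <= threshold]
            right = [i for i, r in enumerate(rows) if r[feature] > threshold]
            if not left or not right:
                continue  # a split must send households to both sides
            score = (len(left) * gini([labels[i] for i in left])
                     + len(right) * gini([labels[i] for i in right])) / len(rows)
            if best is None or score < best[0]:
                best = (score, feature, threshold, left, right)
    if best is None:
        return majority(labels)
    _, feature, threshold, left, right = best
    return {
        "feature": feature,
        "threshold": threshold,
        "left": fit_tree([rows[i] for i in left], [labels[i] for i in left], max_depth - 1),
        "right": fit_tree([rows[i] for i in right], [labels[i] for i in right], max_depth - 1),
    }

def predict(tree, row):
    """Walk the tree from the root until a leaf label is reached."""
    while isinstance(tree, dict):
        branch = "left" if row[tree["feature"]] <= tree["threshold"] else "right"
        tree = tree[branch]
    return tree

# Synthetic households (hypothetical features and assignments).
rows = [
    {"has_children": 0, "monthly_income": 0},
    {"has_children": 0, "monthly_income": 200},
    {"has_children": 0, "monthly_income": 900},
    {"has_children": 1, "monthly_income": 0},
    {"has_children": 1, "monthly_income": 300},
    {"has_children": 1, "monthly_income": 1200},
]
labels = ["ES", "ES", "ES", "TH", "TH", "ES"]

tree = fit_tree(rows, labels, max_depth=2)  # depth cap keeps the rule human-readable
print(tree)
```

Capping `max_depth` is what keeps the result readable as a two-question heuristic. A deeper tree would fit more cases but would no longer resemble something a caseworker could apply quickly.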

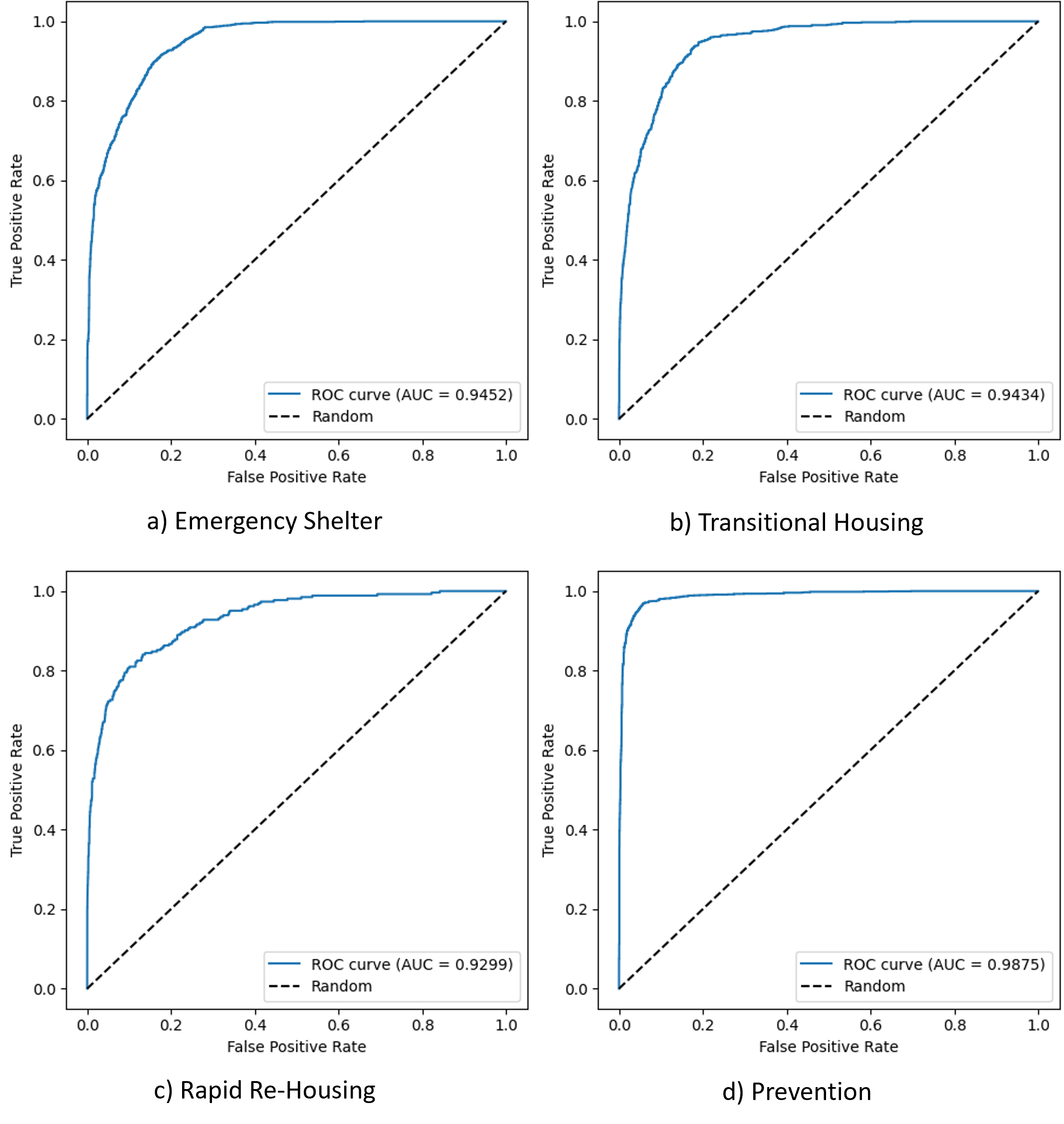

2) Consistency via a high-capacity model

We train a stronger predictive model (e.g., gradient-boosted trees) to test whether caseworker decisions are consistent even when not captured by the short heuristics.

3) Operationalizing discretion

We treat a decision as discretionary when the actual assignment differs from the one the short-tree heuristic predicts. These mismatches capture “beyond-heuristic” judgment.
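The definition above amounts to a per-household comparison of two labels. A minimal sketch, assuming the heuristic's predictions and the actual assignments are available as parallel lists (the function name, record fields, and sample values are hypothetical):

```python
def flag_discretion(heuristic_pred, actual):
    """Mark each household whose actual assignment departs from the heuristic."""
    if len(heuristic_pred) != len(actual):
        raise ValueError("prediction and assignment lists must have equal length")
    records = []
    for pred, act in zip(heuristic_pred, actual):
        is_discretionary = pred != act
        records.append({
            "predicted": pred,
            "actual": act,
            "discretionary": is_discretionary,
            # Direction matters later: ES->TH is an upgrade, TH->ES a downgrade.
            "direction": f"{pred}->{act}" if is_discretionary else None,
        })
    return records

# Synthetic example: the second and fourth households are discretionary.
heuristic_pred = ["ES", "ES", "TH", "TH"]
actual = ["ES", "TH", "TH", "ES"]
records = flag_discretion(heuristic_pred, actual)
print([r["direction"] for r in records])
```

Recording the direction of each mismatch keeps upgrades and downgrades separable in later analysis.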

Key Findings

A) Many decisions are explainable by simple rules

Short trees capture a meaningful share of assignment decisions, consistent with the idea that caseworkers rely on heuristics for many cases.

B) Beyond heuristics, decisions are still highly consistent

A more flexible model predicts assignments extremely well, suggesting caseworkers are procedurally consistent rather than arbitrary.

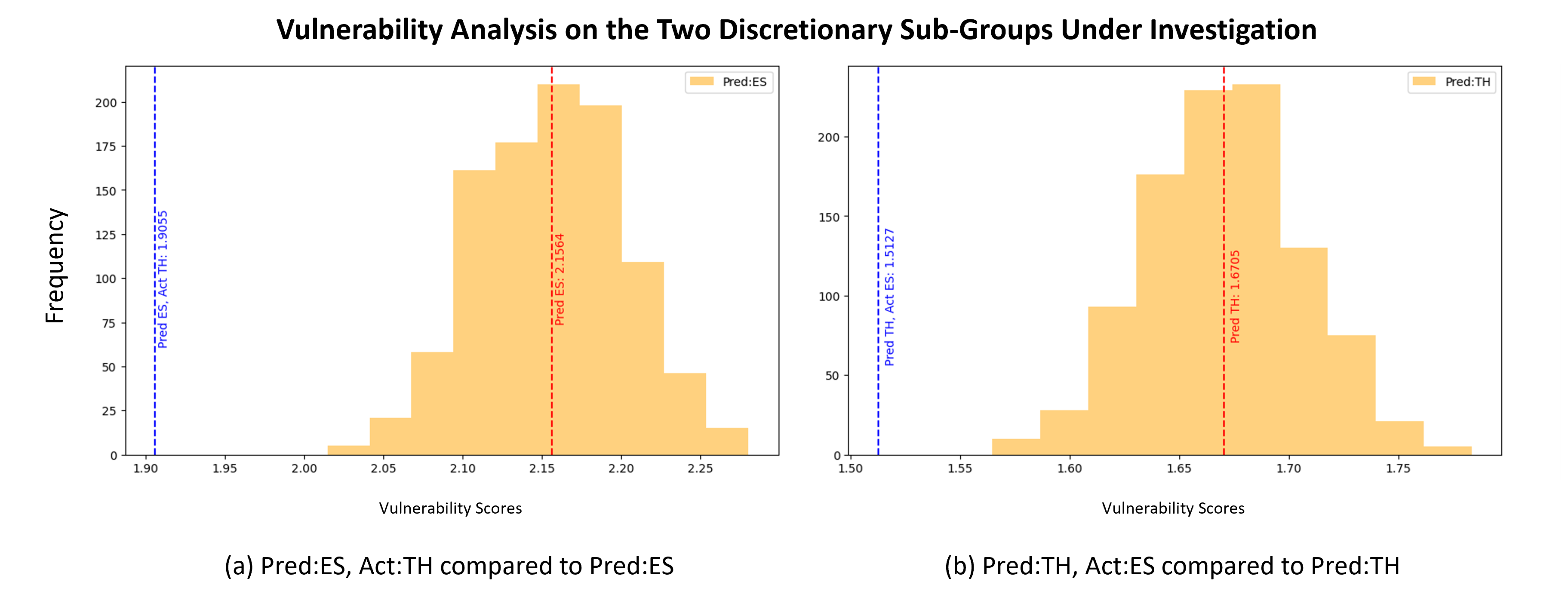

C) Discretion is targeted and not random

When discretion occurs, it tends to be applied to lower-vulnerability households rather than the most vulnerable.

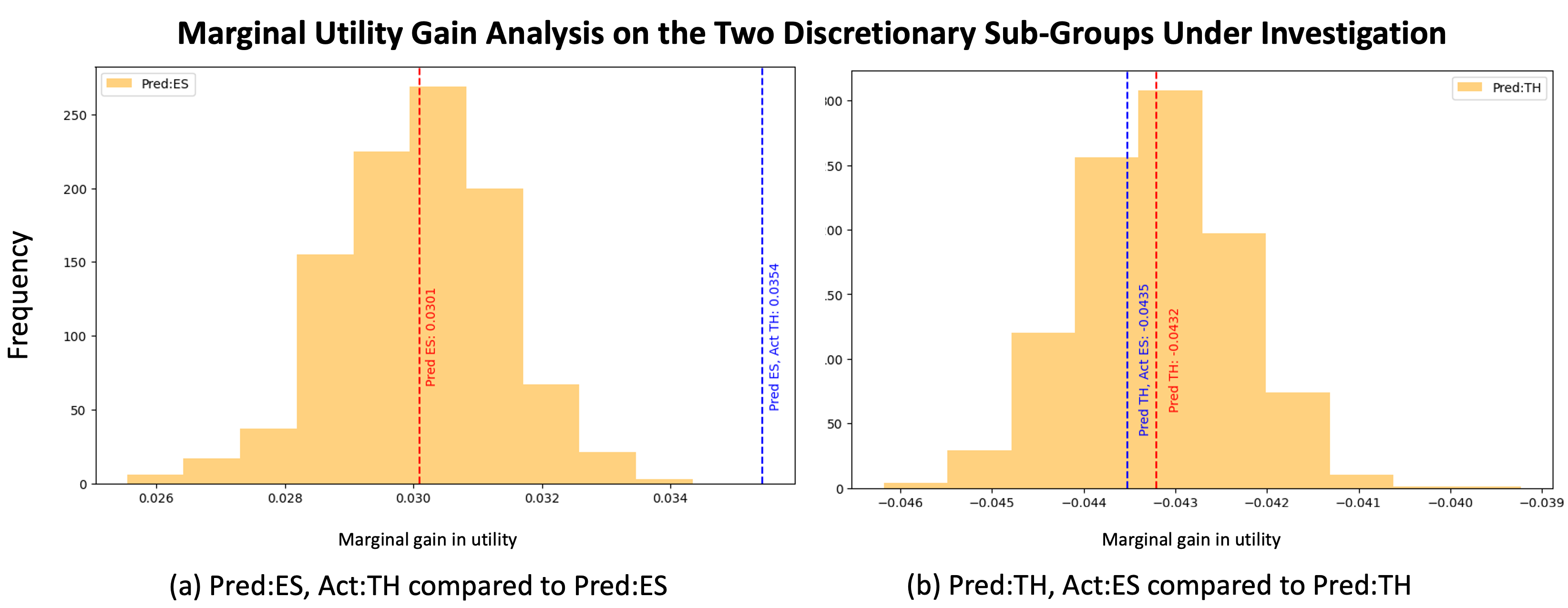

D) Discretionary upgrades align with higher marginal benefit

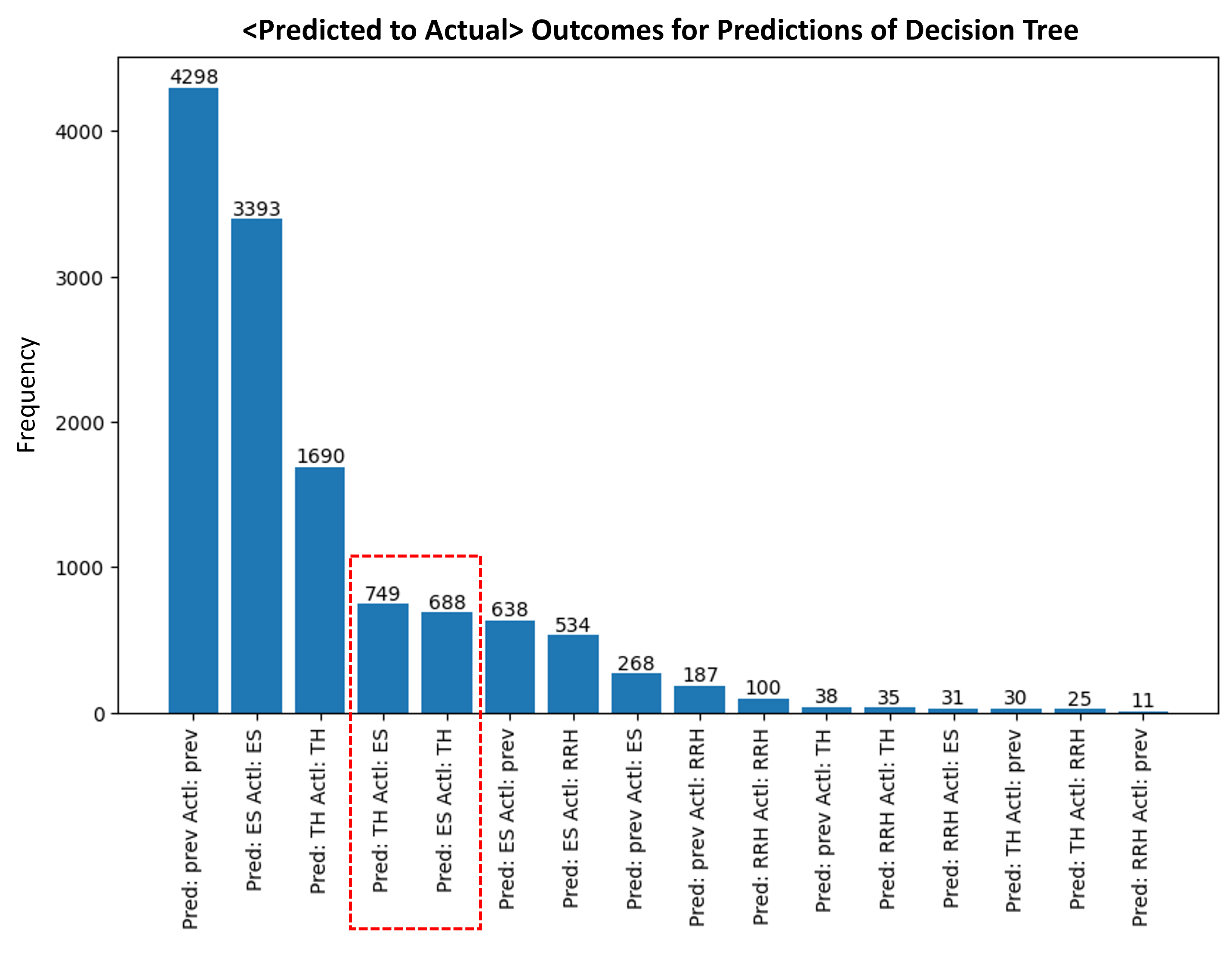

Discretionary moves from predicted ES → actual TH are associated with higher expected marginal benefit than random reassignment, while discretionary TH → ES does not show a comparable loss.
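One way to picture the comparison against random reassignment is sketched below. It assumes each household has a pre-computed estimate of the benefit of TH over ES (`benefit_of_th`, a hypothetical name with synthetic values). How the paper estimates marginal benefit is not described in this summary, so this is a stand-in rather than the authors' procedure.

```python
import random
from statistics import mean

def upgrade_vs_random(benefit_of_th, predicted, actual, n_draws=1000, seed=0):
    """Compare the mean benefit of discretionary ES->TH households with the
    mean benefit of equally sized random draws from all predicted-ES households."""
    pool = [b for b, p in zip(benefit_of_th, predicted) if p == "ES"]
    upgraded = [b for b, p, a in zip(benefit_of_th, predicted, actual)
                if p == "ES" and a == "TH"]
    if not upgraded:
        raise ValueError("no discretionary ES->TH households to evaluate")
    rng = random.Random(seed)  # fixed seed so the baseline is reproducible
    baseline = mean(mean(rng.sample(pool, len(upgraded))) for _ in range(n_draws))
    return mean(upgraded), baseline

# Synthetic values only: estimated benefit of TH relative to ES per household.
benefit_of_th = [0.30, 0.05, 0.25, 0.02, 0.04, 0.10]
predicted = ["ES", "ES", "ES", "ES", "ES", "TH"]
actual = ["TH", "ES", "TH", "ES", "ES", "TH"]

observed, baseline = upgrade_vs_random(benefit_of_th, predicted, actual)
print(f"discretionary upgrades: {observed:.3f}, random reassignment: {baseline:.3f}")
```

If the observed mean sits well above the random baseline, the upgrades went to households with more to gain than a typical predicted-ES household. The same function with the labels swapped would cover the TH → ES direction.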

E) A compact way to visualize “discretion” in the dataset

The predicted-versus-actual distribution of assignments shows at a glance where the heuristic and the caseworker's decision diverge.
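The counts behind such a plot are a two-way table of heuristic prediction against actual assignment. A minimal sketch with synthetic labels (the function name and data are hypothetical):

```python
from collections import Counter

def crosstab(predicted, actual, labels=("ES", "TH")):
    """Count households in each (predicted, actual) cell."""
    counts = Counter(zip(predicted, actual))
    return {p: {a: counts[(p, a)] for a in labels} for p in labels}

predicted = ["ES", "ES", "TH", "TH", "ES", "TH"]
actual = ["ES", "TH", "TH", "ES", "ES", "TH"]
table = crosstab(predicted, actual)

# Off-diagonal cells are the discretionary (mismatched) assignments.
for p, row in table.items():
    print(p, row)
```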

Why this matters for decision support

This is evidence for a “human-in-the-loop” design: simple rules explain a large portion of decisions, and discretion appears to be applied in structured ways that, at least on average, align with improved outcomes for certain cases. That motivates decision support tools that make heuristics explicit and that support and monitor discretion, rather than replacing frontline judgment.

Citation

@article{Pokharel_Das_Fowler_2024,
  title={Discretionary Trees: Understanding Street-Level Bureaucracy via Machine Learning},
  volume={38},
  ISSN={2374-3468, 2159-5399},
  url={https://ojs.aaai.org/index.php/AAAI/article/view/30236},
  DOI={10.1609/aaai.v38i20.30236},
  number={20},
  journal={Proceedings of the AAAI Conference on Artificial Intelligence},
  author={Pokharel, Gaurab and Das, Sanmay and Fowler, Patrick},
  year={2024},
  month=mar,
  pages={22303--22312}
}

This work was partially supported by the NSF (IIS-1939677, IIS-2127752) and by Amazon through an NSF FAI award.